
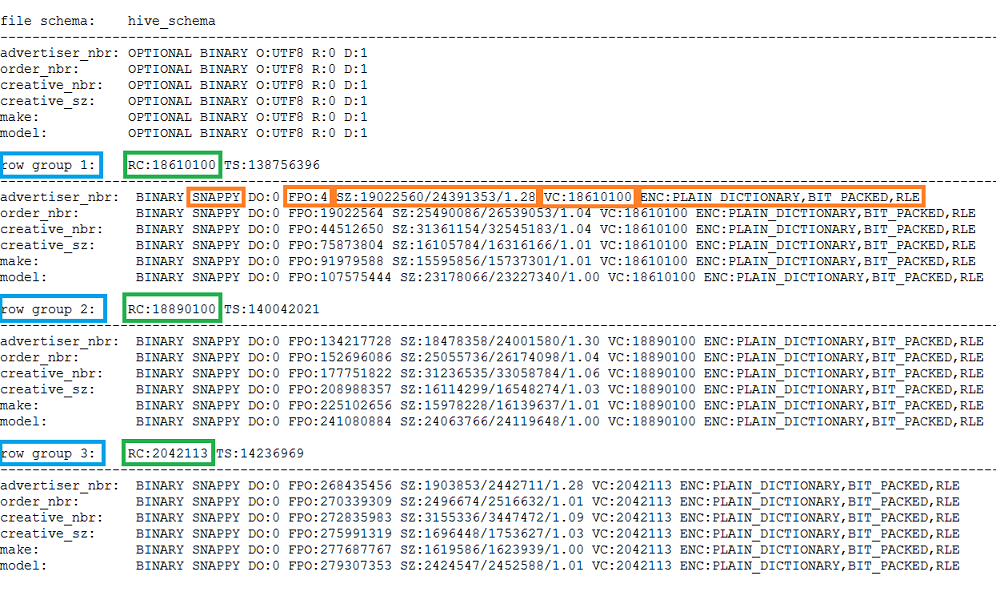

Parquet is an open-source columnar storage format created by the Apache Software Foundation. It is growing in popularity in the big data world because, compared to formats such as CSV, its files are smaller, queries need to scan less data, and queries therefore run faster.

Column names and data types are automatically read from Parquet files, so a query can, for example, return the number of rows for September 2018 without specifying a schema. Keep in mind that if you read a number of files at once, the schema, column names, and data types are inferred from the first file the service gets from the storage. This can mean that some expected columns are omitted, because the file the service used to define the schema did not contain them. To specify the schema explicitly, use the OPENROWSET WITH clause.
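As a rough illustration of the schema inference described above, the query below counts the rows for September 2018 without a WITH clause. It is only a sketch: the data source name `YellowTaxi` and the `puMonth=9` folder are assumptions made for this example, modeled on the `puYear=2018/puMonth=*/*.snappy.parquet` path pattern that appears in this article.

```sql
-- Sketch only. Assumes an external data source named YellowTaxi (hypothetical name)
-- that points at the root of the NYC Yellow Taxi storage, with month folders
-- laid out as puYear=<year>/puMonth=<month>.
SELECT COUNT_BIG(*) AS row_count
FROM OPENROWSET(
        BULK 'puYear=2018/puMonth=9/*.snappy.parquet',
        DATA_SOURCE = 'YellowTaxi',
        FORMAT = 'PARQUET'
    ) AS nyc;   -- no WITH clause: column names and types come from the Parquet metadata
```

Because no schema is declared, the column set is whatever the first file read from storage defines.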

You can query Parquet files the same way you read CSV files. The only difference is that the FORMAT parameter of OPENROWSET should be set to PARQUET. The examples in this article show the specifics of reading various types of Parquet files.

Your first step is to create a database with a data source that references the NYC Yellow Taxi storage account. Then initialize the objects by executing the setup script on that database. This setup script will create the data sources, database scoped credentials, and external file formats that are used in these samples. The NYC Yellow Taxi dataset is used in this sample.

You don't need to use the OPENROWSET WITH clause when reading Parquet files. You can also specify only the columns of interest when you query Parquet files, for example over a set of files matched by a wildcard path such as `puYear=2018/puMonth=*/*.snappy.parquet` in the BULK option.

Instead of using the full path to a file in every query, you can create an external data source (named covid in the original example) with a location that points to the root folder of the storage, and then use that data source and the relative path to the file in the OPENROWSET function. If a data source is protected with a SAS key or custom identity, you can configure the data source with a database scoped credential.

OPENROWSET enables you to explicitly specify which columns you want to read from the file using the WITH clause, for example by appending `with ( date_rep date, cases int, geo_id varchar(6) ) as rows` to the OPENROWSET call of a `select top 10 *` query.

Make sure that you explicitly specify a UTF-8 collation (for example Latin1_General_100_BIN2_UTF8) for all string columns in the WITH clause, or set a UTF-8 collation at the database level. A mismatch between the text encoding in the file and the string column collation might cause unexpected conversion errors. You can easily set the collation on column types, for example: `geo_id varchar(6) collate Latin1_General_100_BIN2_UTF8`. You can also change the default collation of the current database with a T-SQL statement.
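The data source and WITH clause usage described above might look roughly like the following. This is a sketch under stated assumptions: the storage location and the relative file path are placeholders (the original values are not preserved in this article), and only the data source name `covid` and the three column definitions come from the text.

```sql
-- Sketch only. The LOCATION URL and the relative file path are placeholders;
-- replace them with your own storage root and file.
CREATE EXTERNAL DATA SOURCE covid
WITH ( LOCATION = 'https://<storage-account>.blob.core.windows.net/<container>/<root-folder>' );

-- Read only the listed columns, and give the string column a UTF-8 collation.
SELECT TOP 10 *
FROM OPENROWSET(
        BULK '<relative/path/to/file>.parquet',   -- path relative to the data source location
        DATA_SOURCE = 'covid',
        FORMAT = 'PARQUET'
    ) WITH (
        date_rep date,
        cases int,
        geo_id varchar(6) COLLATE Latin1_General_100_BIN2_UTF8
    ) AS rows;
```

Declaring the collation per column keeps the rest of the database unaffected, which can be preferable when only a few queries read Parquet strings.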

If you use the Latin1_General_100_BIN2_UTF8 collation, you get an additional performance boost compared to other collations. This collation is compatible with Parquet string sorting rules, so the SQL pool can eliminate parts of the Parquet files that cannot contain data needed by the query (file and column-segment pruning). If you use other collations, all data from the Parquet files is loaded into Synapse SQL and the filtering happens within the SQL process. This additional optimization works only for Parquet and Cosmos DB. The downside is that you lose fine-grained comparison rules such as case insensitivity.
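To make the trade-off above concrete, here is a minimal sketch of a filter on a string column declared with the BIN2 UTF-8 collation. The data source `covid`, the placeholder path, and the filter value `'XX'` are assumptions for illustration only.

```sql
-- Sketch only. 'covid', the relative path, and the value 'XX' are hypothetical.
SELECT COUNT_BIG(*) AS matching_rows
FROM OPENROWSET(
        BULK '<relative/path/to/file>.parquet',
        DATA_SOURCE = 'covid',
        FORMAT = 'PARQUET'
    ) WITH (
        -- The BIN2 UTF-8 collation is what allows the string filter below to be
        -- used for file and column-segment pruning, as described above.
        geo_id varchar(6) COLLATE Latin1_General_100_BIN2_UTF8
    ) AS rows
WHERE geo_id = 'XX';   -- binary comparison: 'xx' would not match 'XX'
```

The case-sensitive match in the WHERE clause is the practical face of losing case insensitivity under a binary collation.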

Ensure you are using a UTF-8 database collation (for example Latin1_General_100_BIN2_UTF8), because string values in Parquet files are encoded using UTF-8. A mismatch between the text encoding in the Parquet file and the collation may cause unexpected conversion errors. You can easily change the default collation of the current database using the following T-SQL statement: `ALTER DATABASE CURRENT COLLATE Latin1_General_100_BIN2_UTF8;` For more information on collations, see Collation types supported for Synapse SQL.
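A small sketch of changing the database collation and then checking the result is shown here. The ALTER DATABASE statement is the one quoted in this article; the follow-up check against `sys.databases` is an addition for illustration and assumes that catalog view is available in your SQL pool.

```sql
-- Change the default collation of the current database (statement from the article).
ALTER DATABASE CURRENT COLLATE Latin1_General_100_BIN2_UTF8;

-- Illustrative check (an addition, not part of the original article):
-- read the collation back from the catalog.
SELECT name, collation_name
FROM sys.databases
WHERE name = DB_NAME();
```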
